Testing

Overview

This page describes the different strategies and expectations related to the testing of DDF.

Draft

The content of this page is still being worked on.

Definitions

End-to-End Tests

End-to-end tests exercise the entirety of a DDF distribution, with a focus on the standard DDF configurations that would be used in operational environments. Their goal is to guarantee that a properly installed and configured distribution of DDF works as expected. End-to-end tests run in a container (Karaf) installed and configured the same way a customer would use the distribution. Real dependencies such as LDAP, IDP, and SolrCloud should be used. End-to-end tests can range from gray-box tests using a tool like Pax Exam to black-box tests using tools like Citrus or SoapUI.

As of today, DDF end-to-end tests are written using Pax Exam and can be found here.

Component Tests

Component tests (a.k.a. Service tests) focus on a specific DDF component or service. Their basic goals are to:

- Ensure that the component's API behaves as expected and respects the contract it defines.

- Ascertain that the component's configuration files (e.g., blueprint and feature files) work as expected when deployed in an OSGi container.

- Guarantee that there are no improper dependencies or coupling between logical components or services.

- Verify that the component behaves as expected when one of its dependencies fails.

- Validate the component's interactions with its dependencies, i.e., appropriate calls are made and proper data is exchanged.

As noted above, external dependencies are usually mocked or stubbed so that external failures can be properly tested. For instance, the Catalog Framework and other dependencies such as the FilterBuilder could be mocked to test the REST endpoint. If a real dependency is used for component testing, care should still be taken to guarantee that the two components are properly decoupled and that the inclusion of the real dependency does not slow down the tests too much.
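As a minimal sketch of the stubbing approach described above, the following plain-Java example places a hand-written stub behind a small endpoint and checks both the calls it receives and the endpoint's reaction to a dependency failure. `CatalogClient`, `QueryEndpoint`, `StubCatalog`, and the status codes are hypothetical names chosen for illustration and are not DDF APIs; an actual component test would target the real component interfaces, most likely with a mocking library.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class ComponentTestSketch {

    // Hypothetical dependency contract; stands in for a real service.
    interface CatalogClient {
        List<String> query(String filter);
    }

    // Hypothetical component under test: maps results and failures to status codes.
    static class QueryEndpoint {
        private final CatalogClient catalog;

        QueryEndpoint(CatalogClient catalog) {
            this.catalog = catalog;
        }

        int handle(String filter) {
            if (filter == null || filter.isEmpty()) {
                return 400; // invalid input is rejected without calling the dependency
            }
            try {
                return catalog.query(filter).isEmpty() ? 404 : 200;
            } catch (RuntimeException e) {
                return 503; // a dependency failure is reported as "unavailable"
            }
        }
    }

    // Hand-written stub that records the calls it receives and can be told to fail.
    static class StubCatalog implements CatalogClient {
        final List<String> receivedFilters = new ArrayList<>();
        boolean fail;

        @Override
        public List<String> query(String filter) {
            receivedFilters.add(filter);
            if (fail) {
                throw new IllegalStateException("catalog unavailable");
            }
            return Arrays.asList("result-1");
        }
    }

    public static void main(String[] args) {
        StubCatalog stub = new StubCatalog();
        QueryEndpoint endpoint = new QueryEndpoint(stub);

        System.out.println(endpoint.handle("title")); // 200
        System.out.println(stub.receivedFilters);     // [title]

        stub.fail = true;
        System.out.println(endpoint.handle("title")); // 503
        System.out.println(endpoint.handle(""));      // 400
    }
}
```

Because the stub records its inputs, the same test can verify both the data exchanged with the dependency and the component's behavior when that dependency fails.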

Cross-cutting concerns, such as security, should be included in a component test when their presence can affect the behavior of the component itself. For instance, if a component's responsibility is to configure security, then it may make sense to include security as part of the test. Otherwise, the above guidelines apply here as well.

For simple components that only have one or two classes (e.g., plugins), component tests may be used as a substitute for unit tests.

Unit Tests

Unit tests usually focus on a single class. The goal of a unit test is to ensure that the class under test respects its contract and behaves as expected under all possible scenarios, including invalid inputs, dependency failures, and exceptions. Unit tests use a simple runner such as JUnit or Spock, and mock or stub their dependencies using Mockito or Spock to test all possible failure scenarios.

As with component tests, a class's external dependencies are usually mocked out so that downstream failures can be properly tested. There are cases when using a real dependency is acceptable, but that approach should be the exception rather than the rule. It should not cause the tests to take longer to run or go against any of the Unit Testing BestPractices.
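To illustrate the scenarios a unit test is expected to cover, here is a small framework-free sketch that exercises a single class with lambda stubs in place of its dependency. `RetryingReader` and the `check` helper are hypothetical and exist only for this example; in practice each scenario would typically be its own JUnit or Spock test, with Mockito or Spock providing the stubs.

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Supplier;

class UnitTestSketch {

    // Hypothetical class under test: calls its source up to maxAttempts times.
    static class RetryingReader {
        private final Supplier<String> source;
        private final int maxAttempts;

        RetryingReader(Supplier<String> source, int maxAttempts) {
            if (source == null || maxAttempts < 1) {
                throw new IllegalArgumentException("source must be set and maxAttempts >= 1");
            }
            this.source = source;
            this.maxAttempts = maxAttempts;
        }

        String read() {
            RuntimeException last = null;
            for (int attempt = 1; attempt <= maxAttempts; attempt++) {
                try {
                    return source.get();
                } catch (RuntimeException e) {
                    last = e; // remember the failure and try again
                }
            }
            throw new IllegalStateException("all attempts failed", last);
        }
    }

    // Minimal assertion helper so the sketch runs without a test framework.
    static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }

    public static void main(String[] args) {
        // Scenario 1: the dependency succeeds on the first call.
        check(new RetryingReader(() -> "ok", 3).read().equals("ok"), "happy path");

        // Scenario 2: the dependency fails once, then recovers.
        AtomicInteger calls = new AtomicInteger();
        Supplier<String> flaky = () -> {
            if (calls.incrementAndGet() == 1) {
                throw new IllegalStateException("first call fails");
            }
            return "recovered";
        };
        check(new RetryingReader(flaky, 3).read().equals("recovered"), "retry path");
        check(calls.get() == 2, "dependency called twice");

        // Scenario 3: the dependency always fails.
        try {
            new RetryingReader(() -> { throw new IllegalStateException("down"); }, 2).read();
            check(false, "expected an exception");
        } catch (IllegalStateException expected) {
            check(expected.getMessage().equals("all attempts failed"), "failure is reported");
        }

        // Scenario 4: invalid input is rejected.
        try {
            new RetryingReader(null, 1);
            check(false, "expected an exception");
        } catch (IllegalArgumentException expected) {
            // contract respected
        }
        System.out.println("all unit scenarios passed");
    }
}
```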

Test Types Comparison

| | Unit | Component | Integration/end-to-end |
|---|---|---|---|
| Type | White box | Gray box | Black box |
| Scope | Single class | Multiple classes making up a service or component; usually contained in a single Maven module | Application as a whole |
| Coverage | All methods, input/output combinations, conditionals, exceptions, etc.; aim for 100% | Service or component external interfaces, including input/output and error combinations | Application installation, configurations and deployments; interactions with other applications and external systems |
| Mocking | All external classes | External components and services, but usually not embedded libraries | External systems not part of the code base |
| Time to run | Seconds | Few minutes | Minutes to hours |
| Frequency | Every build; when class changed | Every build; when component/service or one of its dependencies changed | Nightly to a few times a day, unless there's a way to know what tests to run based on the changes made |

Strategy

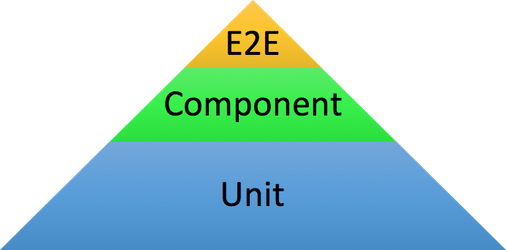

The overall strategy is to achieve a better balance between end-to-end, component, and unit tests. Over time, the distribution of tests should shift towards the test pyramid, with 70% unit tests, 20% component tests, and 10% end-to-end tests.
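One way to track progress towards that distribution is to compare each layer's share of the total test count against the 70/20/10 target. The sketch below does that arithmetic; the class name and the test counts are made up for illustration and do not reflect actual DDF numbers.

```java
class PyramidSplitSketch {

    // Target shares in percent, in the order: unit, component, end-to-end.
    static final int[] TARGET_PERCENT = {70, 20, 10};

    // Returns each layer's share of the total test count, rounded to the nearest percent.
    static long[] sharePercent(int unit, int component, int endToEnd) {
        if (unit < 0 || component < 0 || endToEnd < 0) {
            throw new IllegalArgumentException("test counts must not be negative");
        }
        int total = unit + component + endToEnd;
        if (total == 0) {
            throw new IllegalArgumentException("at least one test is required");
        }
        return new long[] {
            Math.round(100.0 * unit / total),
            Math.round(100.0 * component / total),
            Math.round(100.0 * endToEnd / total)
        };
    }

    public static void main(String[] args) {
        // Hypothetical counts for a code base that leans heavily on end-to-end tests.
        long[] shares = sharePercent(300, 100, 600);
        String[] layers = {"unit", "component", "end-to-end"};
        for (int i = 0; i < layers.length; i++) {
            System.out.println(layers[i] + ": " + shares[i]
                    + "% (target " + TARGET_PERCENT[i] + "%)");
        }
    }
}
```

With these sample counts, the unit share is 30% against a 70% target, which indicates that most of the new testing effort should go into the lower layers.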

The Test Pyramid

Pushing tests down the pyramid has multiple advantages.

- The lower in the pyramid a test is, the easier it is to troubleshoot.

- Tests at the bottom of the pyramid are faster to run and therefore help catch problems sooner.

- Dividing tests into "layers" makes it possible to leverage build dependencies and test only the parts of the software that were affected by a code change. This helps minimize the number of tests executed while still providing a high level of confidence in the change.

For instance, changing a class in the catalog would trigger the unit tests related to that class, then the catalog component tests to guarantee that the API's contract was not affected by the change, and finally the end-to-end tests to ensure that the change didn't affect the installation, overall configuration, and execution of the distribution.

A major side-effect of the above is to reduce the overall build time without compromising code quality.
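The idea of using build dependencies to decide which tests to run can be sketched as a walk over a module dependency graph: start from the changed module and collect everything that depends on it, directly or transitively. The module names below are hypothetical and the code is only an illustration of the technique, not how the DDF build selects tests. Maven's reactor offers an `--also-make-dependents` option that serves a similar purpose.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

class AffectedModulesSketch {

    // Returns the changed module plus every module that depends on it,
    // directly or transitively. Tests in these modules are the ones to run.
    static Set<String> affected(Map<String, List<String>> dependsOn, String changed) {
        // Invert the graph: module -> modules that depend on it.
        Map<String, List<String>> dependents = new HashMap<>();
        for (Map.Entry<String, List<String>> entry : dependsOn.entrySet()) {
            for (String dependency : entry.getValue()) {
                dependents.computeIfAbsent(dependency, k -> new ArrayList<>())
                        .add(entry.getKey());
            }
        }

        // Breadth-first walk from the changed module through its dependents.
        Set<String> result = new TreeSet<>();
        Deque<String> queue = new ArrayDeque<>();
        queue.add(changed);
        while (!queue.isEmpty()) {
            String module = queue.remove();
            if (result.add(module)) {
                queue.addAll(dependents.getOrDefault(module, Collections.emptyList()));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical module graph: each key lists the modules it depends on.
        Map<String, List<String>> dependsOn = new HashMap<>();
        dependsOn.put("catalog-core", Collections.emptyList());
        dependsOn.put("catalog-rest", Arrays.asList("catalog-core"));
        dependsOn.put("security-core", Collections.emptyList());
        dependsOn.put("distribution", Arrays.asList("catalog-rest", "security-core"));

        // prints [catalog-core, catalog-rest, distribution]
        System.out.println(affected(dependsOn, "catalog-core"));
        // prints [distribution, security-core]
        System.out.println(affected(dependsOn, "security-core"));
    }
}
```

In this sketch a change to `catalog-core` leaves the `security-core` tests untouched, which is where the build-time savings come from.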

From a coverage perspective, unit tests should focus on depth (high coverage), component tests should focus on contract validation and how dependency failures are handled, and end-to-end tests should emphasize breadth rather than depth, making sure that the system can be installed and that the main use cases are functional.

A few important things to remember when considering where tests should be added:

- Even though unit tests are usually easier to write, faster to run, provide better coverage, and make it easier to isolate a problem, they are also the most brittle and the most likely to change as they are closely tied to the component's design and class structure. Applying good object-oriented practices can help mitigate this issue (see Object-Oriented Programming References).

- Even though component and service tests are a great way to get quick coverage on a bigger area of the code, it is important to keep their goal in mind, i.e., validating the external contract the component has with other components, as well as ensuring it respects other components' external contract when calling out to them. Their goal is not to achieve high internal code coverage.

- End-to-end tests are the most difficult to implement and the slowest to run, and as such should be kept to a minimum. If a test can be added to either the end-to-end suite or the component suite, it should be added to the latter.

New Components and Services

For new components and services, the pyramid strategy should be followed. Unit tests form the base and account for the greatest amount of testing. The proper component tests are added to validate APIs and interactions with other components and services. Finally, new end-to-end tests are added to verify that the component is functional inside the distribution.

Existing Components and Services

For existing components and services, a different strategy may be needed.

If the component already meets the code coverage standards, the proper component tests should simply be added. Existing end-to-end tests that cover the component should be reviewed and converted to component tests as needed.

If the component has very few unit tests and is mostly tested using end-to-end tests, it might be easier to start by converting those end-to-end tests to component tests. This provides a safety net that makes it easier to refactor the existing code when writing new unit tests, with less risk of impacting the rest of the code that depends on the component under test.

A similar strategy can be used if a component already has some unit tests but requires refactoring in order to increase unit test coverage.

Existing coupling between components and feature files can make writing good component tests difficult, if not impossible. Cleaning up feature files and component dependencies should only be considered if the impact is well understood and does not introduce too much risk. Otherwise, component testing should be postponed until those issues have been properly addressed.

References

Testing

- http://www.testingexcellence.com/agile-test-strategy-example-template/

- https://testing.googleblog.com/2015/04/just-say-no-to-more-end-to-end-tests.html

Object-Oriented Programming

- http://www.oodesign.com/

- http://butunclebob.com/ArticleS.UncleBob.PrinciplesOfOod

- https://en.wikipedia.org/wiki/SOLID_(object-oriented_design)

- https://en.wikipedia.org/wiki/GRASP_(object-oriented_design)

- https://en.wikipedia.org/wiki/Composition_over_inheritance